Let's cut to the chase. The electricity consumption of AI data centers isn't just a talking point for environmentalists. It's a massive operational cost, a logistical nightmare, and the single biggest bottleneck for scaling AI. We're not talking about a few extra servers. A single large-scale AI training run, such as one for a frontier model, can consume more electricity than 100 US homes use in an entire year. And that's just for one training cycle. The real problem starts when you deploy that model to serve millions of queries, 24/7. The numbers are staggering, and frankly, they're unsustainable without a fundamental shift in how we think about power.


Where All the Watts Go: Breaking Down AI Power Use

You can't fix what you don't measure. The first mistake many companies make is treating their AI cluster's power draw as a single, monolithic number. It's not. The consumption profile is split into distinct phases, each with its own demands and optimization opportunities.

1. The Training Phase: The Power-Hungry Beast

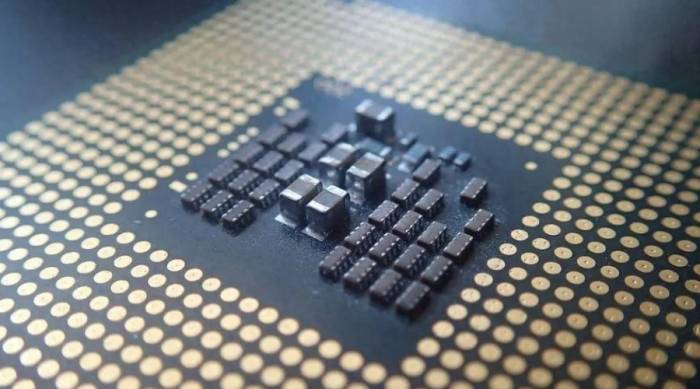

This is the celebrity of AI electricity consumption. Training a large language model involves running thousands of high-end GPUs (like NVIDIA's H100 or AMD's MI300X) at full throttle for weeks or even months. And the power bill isn't just for computation. Virtually every watt a GPU draws ends up as heat that has to be removed, so a large share of the facility's total power, often 30-40%, goes to cooling and other overhead rather than to the chips themselves. I've seen setups where the auxiliary power for the networking switches and storage arrays supporting the GPU cluster added another 15% overhead that no one had budgeted for.

The key metric here is Power Usage Effectiveness (PUE): total facility power divided by the power delivered to IT equipment. A perfect PUE of 1.0 means all power goes to IT equipment. In reality, getting below 1.5 is a challenge for high-density AI racks because the cooling demands are so intense. Many legacy facilities hover around 1.7 or higher, meaning that for every watt your IT equipment uses, you're paying for roughly another 0.7 watts of overhead, most of it for cooling.
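The PUE arithmetic is simple enough to sketch in a few lines. This is a minimal illustration using the standard definition (total facility power divided by IT equipment power); the function names and example figures are hypothetical, not measurements from a real facility.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_per_it_watt(pue_value: float) -> float:
    """Watts of non-IT overhead (cooling, power conversion, lighting) per IT watt."""
    return pue_value - 1.0


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility power that never reaches IT equipment."""
    return 1.0 - 1.0 / pue_value


# Hypothetical facility: 17 MW at the meter, 10 MW reaching servers and network gear.
facility_pue = pue(17_000, 10_000)
print(f"PUE: {facility_pue:.2f}")
print(f"Overhead per IT watt: {overhead_per_it_watt(facility_pue):.2f} W")
print(f"Share of bill not reaching IT: {overhead_share(facility_pue):.0%}")
```

At a PUE of 1.7, about 41% of the electricity bill pays for something other than computation, which is where the 30-40% range above comes from.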

2. The Inference Phase: The Silent Majority

Everyone obsesses over training power, but inference is where the real scale happens. This is the electricity used every time someone asks ChatGPT a question, gets a product recommendation, or uses an AI coding assistant. While a single query uses far less power than training, the volume is astronomically higher and continuous.

This is the phase that keeps data center operators up at night. The load is unpredictable, spiky, and must be served with low latency. You can't just turn these servers off during low demand. Poorly optimized inference can lead to a situation where you're using specialized, power-hungry training GPUs for tasks that could run on far more efficient inference-specific chips. It's like using a Formula 1 car to run daily errands—massive overkill and terrible fuel economy.

The Bottom Line: Focusing only on training efficiency is a rookie mistake. For most businesses, the lifetime inference cost will dwarf the initial training cost. Your power strategy must prioritize inference optimization from day one.
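To see why lifetime inference tends to dominate, here is a back-of-the-envelope sketch of the crossover point. All three inputs are hypothetical placeholders, not published figures for any real model; substitute your own measured training energy, energy per query, and traffic.

```python
import math


def days_until_inference_exceeds_training(
    training_energy_mwh: float,
    energy_per_query_wh: float,
    queries_per_day: float,
) -> int:
    """Smallest whole number of days after which cumulative inference energy
    is strictly greater than the one-off training energy."""
    daily_inference_mwh = energy_per_query_wh * queries_per_day / 1_000_000  # Wh -> MWh
    if daily_inference_mwh <= 0:
        raise ValueError("inference load must be positive")
    # After d days, inference has used d * daily_inference_mwh; find the first d that beats training.
    return math.floor(training_energy_mwh / daily_inference_mwh) + 1


# Hypothetical inputs: 1,000 MWh training run, 0.3 Wh per query, 10M queries/day.
print(days_until_inference_exceeds_training(1_000, 0.3, 10_000_000))
```

With these made-up numbers, inference uses 3 MWh a day and overtakes training on day 334. Triple the traffic and the crossover arrives on day 112, and the model keeps drawing power for as long as it stays in production.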

Cooling: The Hidden (and Costly) Half of the Equation

Cooling is not an afterthought; it's a primary design constraint. An AI server rack can easily draw 40-50 kilowatts, compared to 5-10 kW for a traditional server rack. That concentrated heat is a problem.

Traditional computer room air conditioning (CRAC) units often fall short here. They can't push enough cold air through densely packed racks. The result is hot spots, throttled GPUs (which slow down your expensive training job), and even hardware failure.
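A quick energy balance shows why air runs out of headroom. The heat a stream of air can carry is P = ρ · V̇ · c_p · ΔT, so the required airflow grows linearly with rack power. The sketch below assumes typical air properties near sea level and a 15 K rise between rack inlet and exhaust; both are illustrative assumptions, and it ignores real-world losses such as air bypassing the servers.

```python
AIR_DENSITY_KG_M3 = 1.2          # approx. density of air near sea level at ~20 °C (assumption)
AIR_SPECIFIC_HEAT_J_KG_K = 1005  # approx. specific heat of air at constant pressure
M3S_TO_CFM = 2118.88             # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(rack_kw: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to remove rack_kw of heat when the air warms
    by delta_t_k kelvin between rack inlet and exhaust.

    From P = rho * V_dot * c_p * delta_T  =>  V_dot = P / (rho * c_p * delta_T).
    """
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return rack_kw * 1000 / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


for label, kw in [("traditional rack", 7.5), ("AI rack", 45.0)]:
    flow = required_airflow_m3s(kw, delta_t_k=15)
    print(f"{label}: {kw} kW -> {flow:.2f} m^3/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Under these assumptions, a 7.5 kW rack needs about 0.41 m³/s (roughly 880 CFM), while a 45 kW AI rack needs about 2.5 m³/s (roughly 5,300 CFM): six times the air through the same footprint.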

The industry is moving towards more direct cooling methods:

- Liquid Cooling: This isn't science fiction anymore. Direct-to-chip cold plates and full immersion cooling are becoming standard for high-density AI deployments. I was skeptical of immersion cooling at first; submerging servers in dielectric fluid seemed messy. But the numbers are hard to argue with: it can bring a facility's PUE down to around 1.05, cutting total facility power by 30% or more compared with a typical air-cooled site. The real benefit isn't just efficiency; it's being able to pack more compute into the same space without melting everything.

- Location, Location, Location: This is why you see big tech building data centers in places like Iceland, Norway, and the American Midwest. Free air cooling (using outside air) works for more days of the year. But it's a trade-off: latency to distant users increases, and you're now dependent on a specific geographic region for your core AI infrastructure.
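How much a PUE improvement saves depends on where you start. This minimal sketch assumes the IT load stays constant and only the overhead changes; it ignores any change in the IT load itself (for example, from removing server fans), so treat it as a rough estimate.

```python
def facility_power_saving(old_pue: float, new_pue: float) -> float:
    """Fractional reduction in total facility power when PUE drops from
    old_pue to new_pue, holding the IT load constant.

    Total power = IT load * PUE, so the IT load cancels out of the ratio.
    """
    if old_pue < 1.0 or new_pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - new_pue / old_pue


for baseline in (1.5, 1.7):
    saving = facility_power_saving(baseline, 1.05)
    print(f"PUE {baseline} -> 1.05: {saving:.0%} less total facility power")
```

Moving from a PUE of 1.5 to 1.05 cuts total facility power by 30%; starting from a legacy 1.7, the saving is closer to 38%.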

Real Strategies for AI Data Center Efficiency

Okay, so the problem is huge. What can you actually do about it? Throwing money at more efficient hardware is one part, but software and operational practices are just as critical.

Hardware Choices: Not All Chips Are Created Equal

The GPU arms race isn't just about raw performance (TFLOPS); it's about performance per watt. A chip that's 20% faster but uses 50% more power delivers 20% less work per watt, which might make it a net loss for your total cost of ownership. You need to look at your specific workloads.
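The 20%-faster, 50%-hungrier comparison works out as follows. The `Chip` class and its normalized figures are hypothetical illustrations, not specs for any real product, and the sketch ignores purchase price, which also feeds into total cost of ownership.

```python
from dataclasses import dataclass


@dataclass
class Chip:
    name: str
    relative_throughput: float  # work per second, normalized to a baseline chip
    relative_power: float       # watts drawn, normalized to the same baseline

    def perf_per_watt(self) -> float:
        return self.relative_throughput / self.relative_power

    def energy_per_unit_work(self) -> float:
        # Energy for a fixed amount of work scales as power / throughput.
        return self.relative_power / self.relative_throughput


baseline = Chip("baseline", relative_throughput=1.0, relative_power=1.0)
faster = Chip("faster", relative_throughput=1.2, relative_power=1.5)

for chip in (baseline, faster):
    print(f"{chip.name}: {chip.perf_per_watt():.2f}x perf/W, "
          f"{chip.energy_per_unit_work():.2f}x energy per unit of work")
```

The "faster" chip finishes each job sooner but spends 25% more energy doing it, which is what matters when you're paying for every kilowatt-hour.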

| Optimization Focus | What It Means | Potential Power Saving | Trade-off / Consideration |
|---|---|---|---|
| Chip Specialization | Using inference-optimized chips (e.g., NVIDIA L4, AWS Inferentia, Google TPU) instead of general-purpose training GPUs for serving models. | 40-70% lower power per query | Requires software adaptation; less flexibility. |
| Precision Calibration | Running models in lower numerical precision (FP16, INT8) instead of FP32 after training is complete. | 30-50% less compute energy | Can slightly impact model accuracy; needs careful testing. |
| Dynamic Voltage/Frequency Scaling (DVFS) | Software that adjusts chip power and speed in real-time based on workload demand. | 10-25% at the server level | Requires deep integration with orchestration software (Kubernetes). |
| Workload Scheduling & Batching | Grouping inference requests to keep hardware utilization high, then powering down idle servers. | Major reduction in "always-on" idle power | Increases latency for some requests; complex to implement. |
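The batching row's trade-off (less energy per request, more latency) can be illustrated with a toy model. This sketch assumes a hypothetical linear latency model: a fixed cost per batch plus a marginal cost per request, with the server drawing constant power while it works. The 700 W, 50 ms, and 5 ms figures are made up for illustration; real inference servers behave less tidily.

```python
def batch_latency_s(batch_size: int, fixed_s: float, per_request_s: float) -> float:
    """Hypothetical linear latency model: fixed cost per batch plus a
    marginal cost for each request in the batch."""
    return fixed_s + batch_size * per_request_s


def energy_per_request_j(batch_size: int, power_w: float,
                         fixed_s: float, per_request_s: float) -> float:
    """Energy for one batch, split evenly across the requests in it."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return power_w * batch_latency_s(batch_size, fixed_s, per_request_s) / batch_size


# Hypothetical server: 700 W while busy, 50 ms fixed cost, 5 ms per request.
for b in (1, 8, 32):
    latency_ms = batch_latency_s(b, 0.05, 0.005) * 1000
    energy = energy_per_request_j(b, 700, 0.05, 0.005)
    print(f"batch {b:>2}: {latency_ms:.0f} ms per batch, {energy:.2f} J per request")
```

In this toy model, energy per request falls roughly eightfold from batch size 1 to 32, while each batch takes about four times longer to return.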

The Software Layer: Where the Magic (and Savings) Happens

Hardware is dumb. It's the software that tells it what to do. A poorly written training script or an inefficient model architecture can double your electricity bill for zero gain in outcome.

I advise teams to start profiling their AI workloads with tools like NVIDIA's Nsight Systems or PyTorch Profiler. You'll often find surprising inefficiencies: data loading bottlenecks keeping GPUs idle (but still powered on), unnecessary communication between nodes, or memory transfers that chew through energy.
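Before reaching for a full profiler, a quick check on a power-and-utilization trace can show how much energy goes to GPUs that are waiting. This sketch assumes samples taken at a fixed interval from a single GPU, for example with `nvidia-smi --query-gpu=power.draw,utilization.gpu --format=csv,noheader,nounits -l 1`. The parser assumes that output format, and the 10% idle threshold and the sample trace are hypothetical.

```python
from typing import Iterable, List, Tuple


def parse_nvidia_smi_csv(lines: Iterable[str]) -> List[Tuple[float, float]]:
    """Parse lines like '245.67, 98' (power.draw in W, utilization.gpu in %).
    Assumes one GPU and numeric fields; values like '[N/A]' will raise."""
    samples = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        power, util = (field.strip() for field in line.split(","))
        samples.append((float(power), float(util)))
    return samples


def idle_energy_fraction(samples: Iterable[Tuple[float, float]],
                         interval_s: float = 1.0,
                         idle_threshold_pct: float = 10.0) -> float:
    """Fraction of GPU energy spent while utilization was below the threshold."""
    total_j = 0.0
    idle_j = 0.0
    for power_w, util_pct in samples:
        energy_j = power_w * interval_s  # constant power assumed within each interval
        total_j += energy_j
        if util_pct < idle_threshold_pct:
            idle_j += energy_j
    return idle_j / total_j if total_j else 0.0


# Made-up trace: bursts of work separated by stalls (e.g., waiting on data loading).
trace = ["650, 98", "640, 97", "180, 0", "175, 2", "660, 99", "170, 0"]
print(f"{idle_energy_fraction(parse_nvidia_smi_csv(trace)):.0%} of energy spent idle")
```

In this made-up trace, about 21% of the energy went to a GPU that was mostly waiting. A number like that is a signal to open Nsight Systems or PyTorch Profiler and find out why.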

Model compression techniques (pruning away low-importance neural network connections, quantizing weights) aren't just for making models fit on phones. They reduce the number or the cost of the calculations needed, which translates directly into lower electricity use during inference. A 20% smaller model might run 35% faster and use 40% less power.
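As a concrete illustration of quantizing weights, here is a minimal symmetric, per-tensor INT8 scheme in pure Python. It shows the core idea (one scale factor maps floats onto the range -127 to 127, with a bounded rounding error), not any particular framework's method. Production toolchains typically add per-channel scales and calibration data. The energy savings also come from hardware running INT8 kernels and moving a quarter of the bytes of FP32, which plain Python does not capture.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to guard against float edge cases.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from INT8 values and the shared scale."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.003, 1.0, -0.42]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.4f}", f"max error={max_error:.4f}")
```

Each weight now fits in 1 byte instead of 4, and the rounding error per weight is at most half the scale. That bounded loss is why lower precision usually costs only a little accuracy, though the table above is right that it still needs careful testing.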

The Future of AI Power: What's Next?

The current trajectory is impossible. If AI compute demand continues to double every few months, we'll literally run out of feasible power generation and grid capacity. The industry knows this. The next wave of innovation is being forced by the power wall.

Look for:

- Neuromorphic and Analog AI Chips: Research hardware that mimics the brain's efficiency, performing computations with much less energy. It's early, but the potential is revolutionary.

- Tighter Integration with Renewable Grids: AI data centers acting as flexible loads, scheduling non-urgent training jobs for when solar or wind power is abundant. Some are even exploring on-site generation, like small modular reactors (SMRs), though that's a political and regulatory minefield.

- The Rise of "Carbon-Aware Computing": APIs and tools that let developers make trade-offs between model performance, speed, and estimated carbon footprint. Choosing a slightly less accurate model that's vastly more efficient could become a standard ethical and economic choice.
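The flexible-load idea behind carbon-aware scheduling can be sketched as a sliding-window search: given an hourly forecast of grid carbon intensity, start a deferrable job in the window with the lowest total intensity. The forecast values below are hypothetical, and where a forecast comes from (a grid operator, a carbon-intensity service) is left open. Constant power draw over the job is also an assumption.

```python
from typing import List


def best_start_hour(forecast_g_per_kwh: List[float], job_hours: int) -> int:
    """Index of the start hour whose contiguous job_hours-long window has the
    lowest total (and therefore average) forecast carbon intensity."""
    if not 0 < job_hours <= len(forecast_g_per_kwh):
        raise ValueError("job_hours must fit inside the forecast")
    window = sum(forecast_g_per_kwh[:job_hours])
    best_sum, best_start = window, 0
    for start in range(1, len(forecast_g_per_kwh) - job_hours + 1):
        # Slide the window one hour: add the new last hour, drop the old first hour.
        window += forecast_g_per_kwh[start + job_hours - 1] - forecast_g_per_kwh[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start


# Hypothetical hourly forecast (gCO2/kWh) with a midday solar dip.
forecast = [420, 410, 390, 300, 210, 180, 190, 260, 350, 400]
print(best_start_hour(forecast, job_hours=3))
```

For this forecast, a three-hour job scheduled to start at hour 4 runs entirely inside the dip, averaging about 193 gCO2/kWh instead of about 407 if it started immediately.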

The companies that win the AI race won't just be the ones with the smartest algorithms. They'll be the ones that get the most out of every joule.
