Let's cut to the chase. The short answer is a cautious yes, but it's complicated. The narrative that AI is becoming more energy efficient isn't a simple story of linear progress. It's a fierce, multi-front battle between the explosive growth in model size and compute demand, and the innovative hardware, software, and architectural leaps trying to rein it in. Think of it as a high-stakes efficiency race where the finish line keeps moving. The real question isn't just "is it happening?" but "where is it happening, how fast, and is it enough?"

The Drivers Behind AI's Energy Efficiency Push

This isn't just about being green for PR points. The push for energy efficient AI models is driven by hard economics and practical limits.

Cost. Training a single massive model like GPT-3 was estimated to cost millions of dollars in compute, and one widely cited estimate puts the electricity used at roughly 1,300 MWh. For companies deploying AI at scale (think Google Search, Meta's content recommendations, or Tesla's self-driving), shaving off even a few percentage points in power consumption can translate to tens of millions in annual savings. Efficiency is now a core competitive advantage.

Physical and Environmental Limits. Data centers are hitting power capacity walls. The transistor scaling that Moore's Law describes is slowing, so each new chip generation delivers smaller automatic efficiency gains. The environmental footprint is becoming a tangible business risk and a source of public scrutiny. The Stanford AI Index Report has consistently highlighted the soaring energy demands of training state-of-the-art models. This external pressure is forcing a rethink.

Democratization and Edge AI. We want powerful AI not just in cloud data centers, but in our phones, cars, and IoT devices. These environments have stringent power and thermal budgets. An energy-hungry model is a non-starter for a smartphone feature that needs to run all day.

Hardware Innovations: The Foundation of Efficiency

This is where some of the most tangible gains are happening. It's not just about faster chips, but chips designed from the ground up for AI workloads.

Specialized AI Accelerators

General-purpose CPUs are terrible at AI math. Companies like Nvidia, Google, AMD, and a host of startups are building processors specifically for the matrix multiplications at AI's core.

- Nvidia's Hopper and Blackwell Architectures: These aren't just GPUs anymore; they're full-stack computing platforms. They integrate a dedicated Transformer Engine (hardware support tuned for the Transformer architecture behind ChatGPT) and use advanced packaging to move data between processor and memory with far less energy. Nvidia claims its newer chips deliver many times better performance-per-watt for AI workloads than chips from just a few generations ago.

- Google's TPU (Tensor Processing Unit): A custom chip originally built to accelerate Google's TensorFlow workloads, and now also programmable from JAX and PyTorch through the XLA compiler. By designing the hardware and software in tandem, Google achieves much higher efficiency for its specific AI tasks. The TPU v5e, for example, is touted for its cost and energy efficiency for inference (running trained models).

Here’s a simplified look at how specialization changes the game:

| Processor Type | Best For | Energy Efficiency for AI | Key Trade-off |
|---|---|---|---|
| General CPU (e.g., Intel Xeon) | Versatile computing tasks | Low | Flexibility |
| General GPU (e.g., older Nvidia) | Parallel processing, graphics | Medium | Good balance for development |
| Specialized AI Accelerator (e.g., Nvidia H100, Google TPU) | AI training & inference | Very High | Cost, less flexibility for non-AI tasks |

Advanced Memory and Chip Design

One of the biggest energy drains in computing isn't the calculation itself, but moving data to and from memory. Innovations like High-Bandwidth Memory (HBM), which stacks DRAM dies and places them in the same package as the processor, and chiplet designs that connect specialized blocks, drastically reduce this data-movement penalty. Less energy spent moving bits means more energy can be spent on useful computation.

Software & Algorithms: Doing More with Less

Hardware gets the headlines, but software optimizations are the silent workhorses of AI energy efficiency. You can have the most efficient chip in the world, and a poorly written algorithm will waste it.

A common misconception I see: engineers assume throwing more efficient hardware at a problem is the solution. Often, the lowest-hanging fruit is in the code and model design itself.

Model Compression Techniques: This is a whole subfield dedicated to shrinking models without killing their performance.

- Pruning: Cutting out redundant or insignificant neurons/weights from a neural network. It's like removing unused wiring from a circuit board.

- Quantization: Reducing the numerical precision of the model's weights and calculations. Instead of using 32-bit floating-point numbers, can we use 16-bit floats, or 8-bit or even 4-bit integers? This reduces memory footprint and compute energy dramatically. Many smartphone AI features rely on heavily quantized models.

- Knowledge Distillation: Training a small, efficient "student" model to mimic the behavior of a large, powerful "teacher" model. The student learns the teacher's wisdom without its bulk.
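
To make the first two techniques concrete, here is a minimal sketch in plain Python. It assumes the simplest variants of each: unstructured magnitude pruning and symmetric per-tensor 8-bit quantization. The function names and the toy weight list are made up for illustration; production frameworks offer more sophisticated versions.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the quantized integers."""
    return [v * scale for v in q]


# Toy weights, invented for this example.
weights = [0.82, -0.03, 0.41, 0.002, -0.67, 0.09, -0.25, 0.015]

pruned = magnitude_prune(weights, sparsity=0.5)   # half the weights become zero
q, scale = quantize_int8(pruned)                  # 8-bit integers plus one float scale
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(pruned, restored))

print("pruned:   ", pruned)
print("quantized:", q)
print("max rounding error:", max_error)
```

The rounding error per weight is bounded by half the scale factor, which is why quantization often costs little accuracy when the weight distribution is well behaved. Real models usually need a calibration or fine-tuning step after either technique.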

Efficient Neural Architecture Search (NAS): Instead of humans manually designing model architectures, we use AI to search for the most efficient design for a given task and hardware constraint. It's like having an AI architect that optimizes for both accuracy and power consumption.

Architectural Shifts: Rethinking How AI Works

Beyond tweaking existing models, there's a push to invent fundamentally more efficient architectures. The Transformer model that powers today's LLMs is powerful but notoriously compute-hungry.

Researchers are exploring alternatives like State Space Models (e.g., Mamba), which promise similar performance with linear scaling in compute relative to sequence length, unlike the quadratic scaling of Transformers. If these pan out, they could be a game-changer for long-context tasks.
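
A rough way to see why the scaling difference matters is to count operations as the sequence grows. The sketch below deliberately ignores constant factors such as hidden dimension and state size, so the functions are simplifications and not a cost model of any real architecture.

```python
def attention_pairwise_ops(seq_len):
    """Self-attention scores every token against every other token: O(n^2)."""
    return seq_len * seq_len


def linear_scan_ops(seq_len):
    """A recurrent or state-space scan visits each token once: O(n)."""
    return seq_len


for n in (1_000, 10_000, 100_000):
    ratio = attention_pairwise_ops(n) / linear_scan_ops(n)
    print(f"{n:>7} tokens: quadratic/linear op ratio = {ratio:,.0f}x")
```

Doubling the context doubles the work for the linear scan but quadruples it for pairwise attention, which is where the gap on long documents comes from.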

Another shift is the rise of Mixture of Experts (MoE) models. Instead of activating a trillion-parameter model for every query, an MoE model has many smaller "expert" sub-networks. A routing network activates only the relevant experts for a given input. This means a massive model in terms of total parameters, but a much smaller, more energy-efficient model in terms of active parameters per task.
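
The routing idea can be sketched in a few lines. This toy version assumes top-k routing with softmax weights over the selected experts, one common scheme among several. The experts are plain scalar functions and the router scores are hard-coded; in a real model both would be learned layers, and the scores would be computed from the input.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([router_scores[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


# Eight toy "experts"; expert number s simply multiplies its input by s.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
# Hypothetical router output for a single input.
router_scores = [0.1, 2.0, -1.0, 0.3, 1.5, -0.5, 0.0, 0.2]

selected = route_top_k(router_scores, k=2)
output = moe_forward(3.0, experts, router_scores, k=2)
active_fraction = len(selected) / len(experts)

print("selected experts:", [i for i, _ in selected])
print("fraction of experts run:", active_fraction)
```

Only two of the eight experts execute for this input, so the compute per query tracks the active parameters while the total parameter count stays large.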

Then there's the pragmatic move towards smaller, domain-specific models. The industry's obsession with giant, general-purpose LLMs is giving way to a realization: a fine-tuned 7-billion-parameter model can often outperform a bloated generic one for a specific job, using a fraction of the energy. This is a crucial trend for enterprise adoption.

Measuring the Real-World Impact

So, with all this innovation, are we seeing a net positive trend? Evidence suggests yes, but with major caveats.

Benchmark results such as the MLPerf suite run by MLCommons show that, on standardized workloads, the performance-per-watt of AI systems has been improving significantly year over year. As a purely illustrative example, a task that took 100 joules of energy on 2020 hardware might take only 30 joules on 2023 hardware.

However—and this is a big however—the absolute energy consumption of the AI field is still growing. Why? Because the rate of deployment and the ambition of models are exploding even faster than efficiency gains. It's like improving a car's fuel efficiency by 50% but then driving ten times more miles. The total fuel use still goes up.
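
The car analogy is easy to check with arithmetic. In this small sketch, total energy relative to a baseline is simply demand growth divided by efficiency gain; the function name is made up for illustration.

```python
def relative_total_energy(efficiency_gain, demand_growth):
    """Total energy use relative to the baseline (1.0 means unchanged).

    efficiency_gain: work done per unit of energy vs. baseline (1.5 = 50% better).
    demand_growth:   amount of work requested vs. baseline (10 = ten times more).
    """
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return demand_growth / efficiency_gain


# The car example: 50% better fuel efficiency, ten times the miles.
car = relative_total_energy(efficiency_gain=1.5, demand_growth=10.0)
print(f"fuel use vs. baseline: {car:.2f}x")

# Break-even: total energy stays flat only if demand grows no faster than efficiency.
flat = relative_total_energy(efficiency_gain=2.0, demand_growth=2.0)
print(f"break-even case: {flat:.2f}x")
```

Total consumption falls only when the efficiency gain outpaces demand growth, and for AI right now the opposite is true.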

My own observation from talking to data center operators is that the efficiency gains are real and desperately needed just to keep their power bills and cooling systems manageable. They're not saving energy in an absolute sense; they're using that efficiency to cram more AI compute into the same power envelope.

The Significant Challenges Ahead

The path to truly sustainable AI is fraught with obstacles.

The Jevons Paradox: This economic principle states that as something becomes more efficient and cheaper to use, its total consumption can increase. More efficient AI could simply lead to us deploying it in more frivolous, widespread ways, negating the environmental benefit.

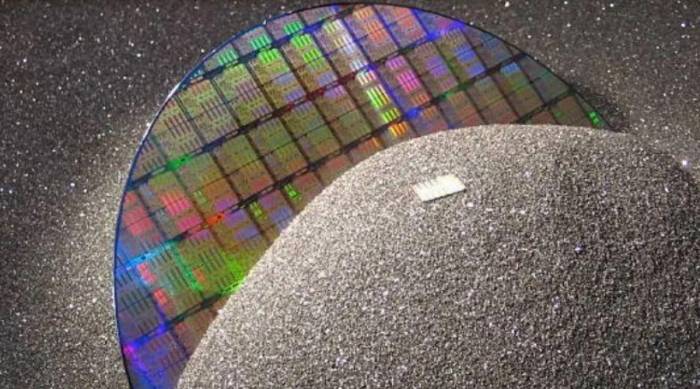

Hardware's Physical Limits: While specialized chips are great, their design and manufacturing are incredibly energy-intensive. The carbon debt from building a new chip fab isn't trivial. And we are approaching atomic-scale limits on how much smaller and more efficient silicon transistors can get.

The Carbon Source of Electricity: An AI model running on a grid powered by renewables is far greener than one running on coal. The push for AI energy efficiency must go hand-in-hand with the global transition to clean energy. Location matters.
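
To see how much location can matter, multiply the energy a workload uses by the carbon intensity of the grid it runs on. The workload size and the grid intensities below are hypothetical round numbers chosen for illustration, not measurements of any real region.

```python
def emissions_kg(energy_kwh, grid_intensity_g_per_kwh):
    """Operational CO2-equivalent emissions in kilograms."""
    return energy_kwh * grid_intensity_g_per_kwh / 1000.0


# Hypothetical workload and grid intensities (grams CO2e per kWh).
workload_kwh = 10_000
grids = {
    "coal-heavy grid": 800,
    "mixed grid": 400,
    "mostly renewable grid": 50,
}

for name, intensity in grids.items():
    print(f"{name:>22}: {emissions_kg(workload_kwh, intensity):,.0f} kg CO2e")
```

With these assumed numbers, the same job emits sixteen times more on the coal-heavy grid than on the mostly renewable one, without changing a single line of model code.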

Lack of Standardized Metrics: It's still surprisingly hard to get a clear, apples-to-apples comparison of the energy footprint of different AI models or services. The community needs better tools and transparency.
